Disclaimer: I've known Prashant for over 7 years and have worked closely with him on many projects. Biases are natural, but I will try to keep this article as objective as possible. Context for the book: With 2 years of Covid-19 behind us, we are now living in a world very different from what used… Continue reading Book Review – Future of Work: Resilient Growth Principles

Using AWS SAM default template in PyCharm: Python Version Error

If you are using the PyCharm default template to create your AWS serverless application, you may have run into the following issue: docker ps /usr/local/bin/sam build HelloWorldFunction --template /Users/appc/PycharmProjects/workspacesskill/template.yaml --build-dir /Users/appc/PycharmProjects/workspacesskill/.aws-sam/build Building codeuri: /Users/appc/PycharmProjects/workspacesskill/hello_world runtime: python3.6 metadata: {} architecture: x86_64 functions: ['HelloWorldFunction'] Build Failed Error: PythonPipBuilder:Validation - Binary validation failed for python, searched for python in…
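The build log above shows `sam build` looking for a `python3.6` binary and failing validation. A minimal sketch of a pre-flight check, assuming the runtime is declared as a `Runtime: pythonX.Y` line in `template.yaml`: it lists the declared runtimes and reports whether a matching interpreter is on your `PATH`. The helper names and the sample template (including the `Handler` value) are hypothetical and for illustration only; this is not part of PyCharm or the SAM CLI.

```python
import re
import shutil
from typing import List, Optional

# Illustrative template fragment (an assumption, not the author's actual file).
SAMPLE_TEMPLATE = """\
Resources:
  HelloWorldFunction:
    Type: AWS::Serverless::Function
    Properties:
      CodeUri: hello_world/
      Handler: app.lambda_handler
      Runtime: python3.6
"""


def find_runtimes(template_text: str) -> List[str]:
    """Return every pythonX.Y runtime declared on a 'Runtime:' line."""
    pattern = r"^\s*Runtime:\s*[\"']?(python\d+\.\d+)[\"']?\s*$"
    return re.findall(pattern, template_text, flags=re.MULTILINE)


def interpreter_path(runtime: str) -> Optional[str]:
    """Return the path of a binary named like the runtime, or None if absent."""
    return shutil.which(runtime)


if __name__ == "__main__":
    for runtime in find_runtimes(SAMPLE_TEMPLATE):
        path = interpreter_path(runtime)
        if path is None:
            print(f"{runtime}: no matching interpreter found on PATH")
        else:
            print(f"{runtime}: found at {path}")
```

If the declared runtime has no matching interpreter, the usual options are to install that Python version or to change the `Runtime` value to a version you do have installed and that Lambda supports.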

Book Review: The Basics of Bitcoins and Blockchains

The Basics of Bitcoins and Blockchains: An Introduction to Cryptocurrencies and the Technology that Powers Them by Antony Lewis. My rating: 5 of 5 stars. This is the best investment of my 10 hours and $10 this week. This is such a great introduction to the whole crypto ecosystem. After hours of YouTube and dozens of…

Book Review: The Spy and the Traitor

The Spy and the Traitor: The Greatest Espionage Story of the Cold War by Ben Macintyre. My rating: 5 of 5 stars. 10 x (James Bond movies) = this one book. James Bond is a fictional British spy. But Oleg Gordievsky is real. His story is way more entertaining, eventful and fascinating. The book references several events in the…

Book Review: The Almanack

The Almanack of Naval Ravikant: A Guide to Wealth and Happiness by Eric Jorgenson. My rating: 2 of 5 stars. I don’t know what to make of this book 😦 I don’t even know whether I am supposed to be entertained or educated. Maybe both. Is this supposed to be a business book or a philosophy…

Book Review: Blood and Oil

Blood and Oil: Mohammed bin Salman's Ruthless Quest for Global Power by Bradley Hope. My rating: 3 of 5 stars. “No dynasty lasts more than 3 generations” – Arabic saying. This is exactly what MBS (Mohammed bin Salman) is out to change. There are two things that Saudi Sheikhs can’t resist. Wallowing in the opulence afforded…

Pothan’s Obsession with Everyday Things

Note 1: If you are not a Malayalam movie buff, I recommend you skip this post. Malayalam cinema is undergoing a radical change and the Pothan team is its harbinger. What amazes me about Pothan is his obsession with making movies that revolve around seemingly ordinary, everyday things. Maheshinte Prathikaram starts with a classic "blue chappal"! Something every Malayali…

Interesting thought!

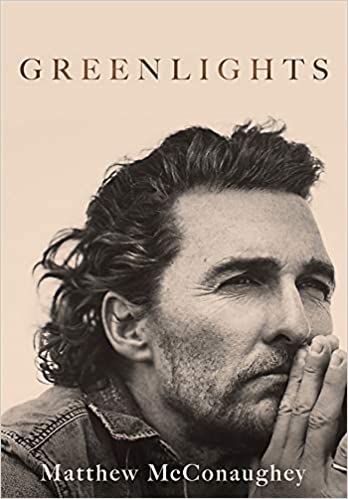

Book Review: Greenlights by Matthew McConaughey

Greenlights by Matthew McConaughey. My rating: 3 of 5 stars. They say everything is bigger in Texas. I say even Texas is not big enough for McConaughey and his ideals. This is the story of a Southern boy pouring out 35 years of his view of life into what he calls an “approach book”. This is…

Book Review: Why We Sleep

Why We Sleep: Unlocking the Power of Sleep and Dreams by Matthew Walker. My rating: 4 of 5 stars. When I was grappling with a new idea, my manager told me, “Just sleep on it”. When I was sick, my mother told me, “Get some sleep, you’ll be fine tomorrow”. When I had an important presentation the next…